This is the second part in a series on how we’ve learned to improve the quality of the GameChanger app. In part 1, I talked about enforcing release criteria that are based primarily on quality and not time.

A great way to reduce the risk of a release process is to reduce batch size. Batch size is the amount of work that moves through the development pipeline as a single unit. For instance, if you were to write 10 lines of code, then test it, and then ship it, you’d have shipped a 10-line batch of code.

Though it’s counterintuitive, it’s usually more efficient and less risky to ship several small batches than a few large ones. As Fred Wilson writes, “Big changes create big problems. Little changes create little problems.” Eric Ries has a good post on the topic. You should read every word of that post.

How we work in small batches

It’s hard to keep batch sizes small with mobile apps. The App Store review process takes days, and so we have to ship multi-day batches of work to Apple. Even so, we can still operate more efficiently by keeping batches small through the rest of our process.

Small code commits

Big commits are risky and annoying. It’s basically impossible to review a huge diff carefully. Testing a big change is hard and time-consuming. And big commits have more merge conflicts. So we commit small changes often: it’s unusual for our four-person iOS team to have fewer than 10 commits on a given day.

Test in small batches

We test things as soon as we build them, and we immediately fix the bugs that we find. We don’t let all the testing work pile up until just before we release. It’s more efficient for us to fix bugs while the code is still fresh in our minds. We also gain flexibility. Our codebase is always near a shippable state. If our priorities change or if our schedule slips, we can ship what we’ve built, tested, and polished. Then we can replan.

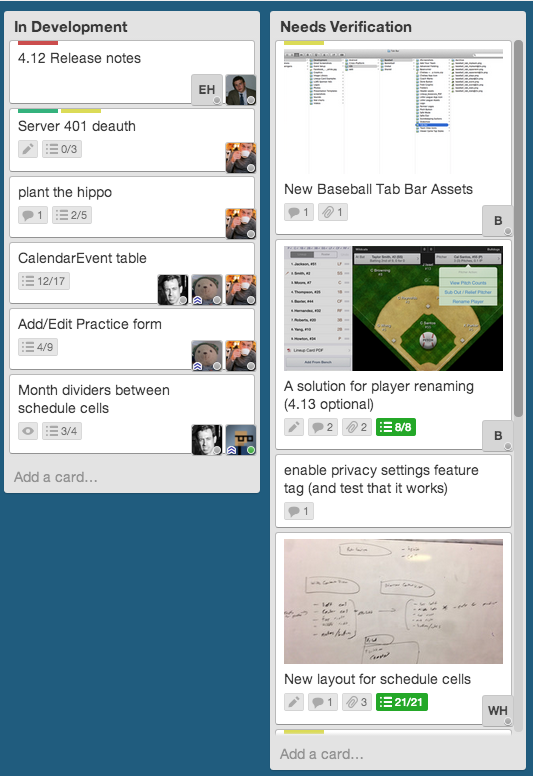

Our Trello board shows our testing progress. If we fall behind on testing, then we can bring in reinforcements from the marketing and customer service teams.

Frequent regression tests

Our customer service team manually regression tests our app three times a week. As we improve our tools and automation, we expect to be able to do a full set of regression tests at least once daily.

Prioritize unit and functional testing

We favor unit and functional automated tests over end-to-end automated tests. By emphasizing unit and functional tests, we force ourselves to design software in a way that can be verified in small batches. When we are testing small batches, we can be more thorough and we reduce the risk that a bad bug will get to customers.

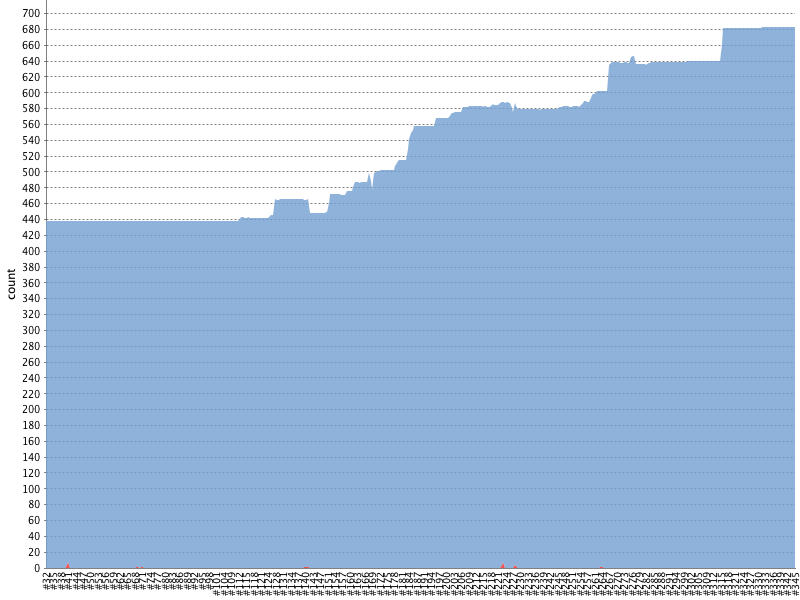

This year, we’ve increased our unit and functional test count by a factor of 15. As the graph above shows, the test count is steadily increasing.
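To make the idea of verifying software in small batches concrete, here is a minimal sketch of a unit test in Python. The `batting_average` helper and its rules are hypothetical, invented for illustration; they are not taken from the GameChanger codebase. The point is only that a small, isolated function can be checked thoroughly in a few lines, without launching the whole app.

```python
import unittest


def batting_average(hits: int, at_bats: int) -> float:
    """Hypothetical scoring helper: hits divided by at-bats, rounded to 3 places."""
    if hits < 0 or at_bats < 0:
        raise ValueError("hits and at_bats must be non-negative")
    if hits > at_bats:
        raise ValueError("hits cannot exceed at_bats")
    if at_bats == 0:
        # No at-bats yet: report 0.0 rather than dividing by zero.
        return 0.0
    return round(hits / at_bats, 3)


class BattingAverageTests(unittest.TestCase):
    def test_typical_line(self):
        self.assertEqual(batting_average(1, 4), 0.25)

    def test_no_at_bats(self):
        self.assertEqual(batting_average(0, 0), 0.0)

    def test_rejects_impossible_line(self):
        # More hits than at-bats can never happen, so the helper refuses it.
        with self.assertRaises(ValueError):
            batting_average(5, 4)
```

Saved to a file, these tests can be run with `python -m unittest`. Each one exercises a single behavior, so a failure points at one small piece of code rather than at an entire end-to-end flow.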

Use pre-release builds internally

We do marketing demos and usability tests against pre-release builds. We kick off a pre-release build after each commit that passes unit and functional tests, and we push those builds to HockeyApp. This policy is another way of reducing our testing batch size.
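The policy above amounts to a simple gate: a commit produces a pre-release build only if its tests pass. The sketch below shows that gate in Python under stated assumptions. The `PreReleasePipeline` class and its `run_tests`, `build`, and `publish` callables are hypothetical stand-ins for whatever a real build system provides; this is not our actual tooling and it does not call any HockeyApp API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class PreReleasePipeline:
    # All three callables are hypothetical placeholders supplied by the caller.
    run_tests: Callable[[str], bool]  # True if unit and functional tests pass
    build: Callable[[str], str]       # returns an identifier for the built artifact
    publish: Callable[[str], None]    # hands the artifact to a distribution service
    published: List[str] = field(default_factory=list)

    def on_commit(self, commit_id: str) -> bool:
        """Build and publish a pre-release only when the commit's tests pass."""
        if not self.run_tests(commit_id):
            return False  # failing commits never reach internal users
        artifact = self.build(commit_id)
        self.publish(artifact)
        self.published.append(commit_id)
        return True


# Usage with stand-in functions: commit "bad" fails its tests, "good" passes.
uploads: List[str] = []
pipeline = PreReleasePipeline(
    run_tests=lambda commit: commit != "bad",
    build=lambda commit: f"app-{commit}.ipa",
    publish=uploads.append,
)
pipeline.on_commit("good")
pipeline.on_commit("bad")
```

Because every passing commit yields its own build, each pre-release differs from the previous one by a single small change, which is what keeps the testing batch small.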

Everyone benefits. The marketing team gets to show off features that aren’t publicly released. Engineers, meanwhile, get early feedback on problems with recently checked-in code.

An obsession with testing

The hardest part of working in small batches has been discovering problems quickly. That’s why most of this post talks about testing our app. The four approaches here — immediate feature testing, regular regression testing, automated unit/functional tests, and regular use of pre-release code — have helped us lower batch size. And smaller batch sizes reduce our risk and give us more options when things go wrong.

There’s lots of room for us to improve. Much of our testing process, for instance, is still manual. In the last part of this series, I’ll talk about how we’re laying the groundwork to do a better job in the future.